Classification

Triangulation:

- Passive 3D measurement
  - Passive binocular measurement
- Active 3D measurement
  - Line structured light
    - Laser triangulation
  - Surface structured light
    - Spatial encoding
    - Sequential encoding

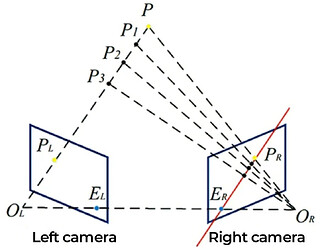

The principle of triangulation

As the picture above shows, a point P in the real world (3D) and its projections PL and PR in the two 2D camera images form a triangle. We can therefore recover P’s 3D position from the known sides and angles of that triangle.

Before we can compute the position, however, we need to know:

- The geometric properties of each camera’s measurement reference frame.

- The positions and poses of the two cameras.

- The corresponding reference points in the two cameras.
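For the simplest case, the triangle can be solved in a few lines. The sketch below assumes a rectified pair of identical pinhole cameras separated by a horizontal baseline, which is a special case chosen for illustration and not something the text above requires. The function name and the parameters `focal_px`, `baseline_m`, `cx`, and `cy` are hypothetical. The three prerequisites map onto the inputs: the camera geometry (`focal_px`, `cx`, `cy`), the relative pose (`baseline_m` plus the rectification assumption), and the corresponding points (`x_left`, `x_right`).

```python
def triangulate_rectified(x_left, x_right, y, focal_px, baseline_m, cx, cy):
    """Recover (X, Y, Z) in the left camera frame from one matched pixel pair.

    Assumes rectified, identical pinhole cameras (an illustrative simplification):
    the same point appears on the same image row in both views.
    """
    disparity = x_left - x_right  # horizontal shift between the two projections
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity   # similar triangles: Z = f * B / d
    x = (x_left - cx) * z / focal_px        # back-project the left pixel
    y3d = (y - cy) * z / focal_px
    return x, y3d, z


# Hypothetical numbers: 800 px focal length, 10 cm baseline, 640x480 image.
point = triangulate_rectified(400, 360, 240, focal_px=800, baseline_m=0.1, cx=320, cy=240)
print(point)  # approximately (0.2, 0.0, 2.0), in meters
```

A general setup with arbitrary camera poses needs full projection matrices, but the idea is the same: two known rays and a known baseline fix the third vertex of the triangle.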

Optical 3D measurement is non-contact, making it suitable for remote sensing and other non-contact scenes. There are primarily two approaches to 3D optical measurement: the passive method and the active method.

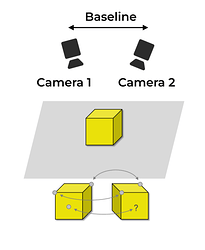

Passive 3D measurement: Passive binocular measurement

Passive 3D measurement depends on physical properties (like textures and colors) to rebuild a 3D model.

Principle

The passive method uses ambient light rather than specialized illumination. Distance information is derived from 2D images taken from one or several viewing angles and is then used to build the object’s 3D point cloud.
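The text does not name a specific matching algorithm, so the following is only a minimal sketch of one common choice: block matching along a scanline with a sum of absolute differences (SAD) cost. The function names, window size, and sample rows are all illustrative assumptions. The function also reports how many candidate disparities tie for the lowest cost, which makes the ambiguity of flat regions visible.

```python
def sad(a, b):
    """Sum of absolute differences between two equal-length windows."""
    return sum(abs(x - y) for x, y in zip(a, b))


def match_disparity(left_row, right_row, x, half_window, max_disparity):
    """Return (best_disparity, tie_count) for pixel x of the left row."""
    lo, hi = x - half_window, x + half_window + 1
    if lo < 0 or hi > len(left_row):
        raise ValueError("window falls outside the row")
    patch = left_row[lo:hi]
    costs = {}
    for d in range(max_disparity + 1):
        if lo - d < 0:
            break  # the shifted window would leave the right image
        costs[d] = sad(patch, right_row[lo - d:hi - d])
    best_cost = min(costs.values())
    best = [d for d, c in costs.items() if c == best_cost]
    return best[0], len(best)


# A textured row: the right view is the left view shifted by 2 pixels.
left = [10, 50, 20, 90, 30, 70, 40, 80, 15, 60]
right = left[2:] + [0, 0]
print(match_disparity(left, right, x=5, half_window=1, max_disparity=3))  # (2, 1)

# A textureless row: every candidate disparity matches equally well.
flat = [50] * 10
print(match_disparity(flat, flat, x=5, half_window=1, max_disparity=3))   # (0, 4)
```

On the textured row the disparity is found uniquely, while on the flat row all four candidates tie, so the reported value is arbitrary.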

Advantages

- Less equipment required (two cameras).

- Adaptable.

- Easy to integrate with hardware.

- Easy to calibrate.

Disadvantages

- Complex calculation and algorithms.

- Easily affected by ambient light.

- Lower accuracy when dealing with objects that have indistinct contours or corners.

Applications

- Scenes where specialized illumination cannot be used, like outdoor activities.

- Scenes where accuracy is not the first priority, such as coarse vehicle navigation in controlled or complex environments.

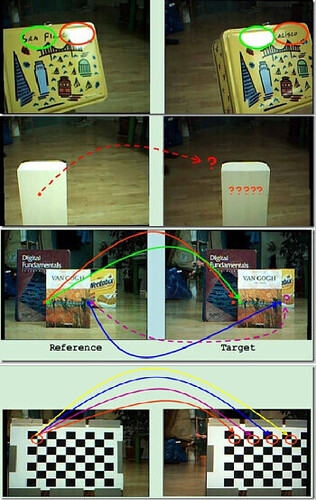

For passive 3D measurement, it is hard to recognize reflective objects and objects without clear textures:

The picture above is from Stereo Vision: Algorithms and Applications by Stefano Mattoccia (Università di Bologna).

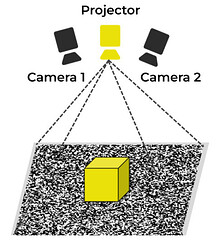

Active 3D measurement

Active 3D measurement resembles passive binocular measurement, but it is not dependent on physical properties. In this method, a random pattern projector (RPP) projects known signals on the object’s surface, addressing the common issue of “lower accuracy when dealing with objects that have indistinct contours or corners” encountered in passive binocular measurement.

Advantages

- The method can measure texture-less objects.

Disadvantages

- The method can only measure short distances.

- The method is easily affected by environmental conditions and sunlight.

Correspondences are established by locating the emitted pattern in the camera-captured images. Researchers have experimented with different kinds of emitted patterns, including a single point, a single line, and fixed or predefined structures.

A schema of active 3D measurement:

Line structured light

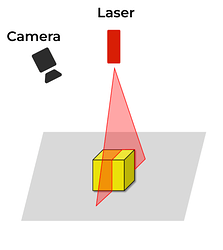

Laser triangulation

In this method, a laser scans the object while a camera, placed at a known position, captures images of the distorted laser line. The positions of points or lines in those images are then used to rebuild the 3D model of the object.

Principle

Laser lines projected onto a 3D object appear distorted from the camera’s perspective. Analyzing how far each line is displaced yields a 3D point cloud, from which the object’s surface shape can be reconstructed accurately.
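How a displaced laser line turns into depth can be sketched with a simplified 2D model. The geometry here is an assumption made for illustration: a pinhole camera at the origin looking along Z, a laser emitter offset by `baseline_m` along X, and a beam tilted by `laser_angle_rad` from the baseline toward the optical axis. The function and parameter names are hypothetical.

```python
import math


def laser_spot_depth(u_px, focal_px, baseline_m, laser_angle_rad):
    """Depth Z of a laser spot seen at horizontal image offset u_px.

    Camera ray:  X = u * Z / f
    Laser beam:  X = b - Z / tan(theta)
    Intersecting the two gives Z = b * f / (u + f / tan(theta)).
    """
    denom = u_px + focal_px / math.tan(laser_angle_rad)
    if denom <= 0:
        raise ValueError("camera ray and laser beam do not meet in front of the camera")
    return baseline_m * focal_px / denom


# One depth per image row of the laser line (offsets in pixels, made-up values).
line_offsets = [40, 40, 50, 40]
profile = [laser_spot_depth(u, focal_px=800, baseline_m=0.1,
                            laser_angle_rad=math.pi / 2) for u in line_offsets]
print(profile)
```

With these made-up values the straight parts of the line sit at roughly 2.0 m, and the row where the line shifts to 50 px comes out closer, at about 1.6 m. Repeating this for every row and every scan position produces the point cloud.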

Advantages

- Laser sources are robust in most environments.

- The measurement principle is simple.

- The method is highly customizable.

Disadvantages

- Repeated scanning is slow.

- The method lacks color information.

- The method is unsuitable for measuring very bright or very dark objects.

Applications

- Conveyors.

A schema of laser triangulation:

Surface structured light

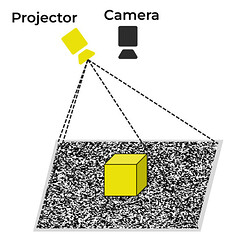

Structured light technology evolved out of passive binocular measurement. It imitates human eyes to obtain 3D information and now it is one of the most reliable technologies in 3D measurement.

By replacing one camera with a projector, structured light technology avoids the search for corresponding points that passive binocular measurement depends on. Like laser triangulation, structured light technology rebuilds models by analyzing distortions of known illumination patterns projected on static 3D objects. Since this technology rebuilds the whole 3D model at once, it is also known as full-field measurement.

Principle

Known patterns are projected onto the object, and the distortions of these patterns provide depth information.

Advantages

- Independent of the object’s textures.

- Only one camera is used, which avoids the correspondence search.

- Structured patterns keep the data-extraction processing load low.

- Fast.

- Regional communication.

Disadvantages

- Like passive binocular measurement, this method has problems with block averaging, resolution, and accuracy.

- Like laser triangulation, this method suffers from missing data and measurement errors on specular, bright, dark, and light-absorbing surfaces.

The disadvantages above could be overcome by spatial modulation and sequential modulation.

A schema of surface structured light:

Spatial encoding

Spatially encoded structured light projects a mathematically encoded pattern onto the space to be measured; the light spots inside any window of the pattern are random. The code value of a point is derived from its neighborhood, and the window size determines the accuracy of the reconstruction.
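The idea that a point's code comes from its neighborhood can be shown in one dimension. The text describes random spots; the sketch below swaps in a De Bruijn sequence instead, because it guarantees that every window of a given length is unique and keeps the example deterministic. That substitution, along with the function names, is an assumption for illustration only.

```python
def de_bruijn(k, n):
    """De Bruijn sequence over k symbols in which every length-n window is unique."""
    a = [0] * (k * n)
    sequence = []

    def db(t, p):
        if t > n:
            if n % p == 0:
                sequence.extend(a[1:p + 1])
        else:
            a[t] = a[t - p]
            db(t + 1, p)
            for j in range(a[t - p] + 1, k):
                a[t] = j
                db(t + 1, t)

    db(1, 1)
    return sequence


def build_window_index(pattern, window):
    """Map each window of symbols to its starting position in the pattern."""
    index = {}
    for i in range(len(pattern) - window + 1):
        key = tuple(pattern[i:i + window])
        if key in index:
            raise ValueError("window is not unique; increase the window size")
        index[key] = i
    return index


pattern = de_bruijn(2, 3)          # [0, 0, 0, 1, 0, 1, 1, 1]
index = build_window_index(pattern, 3)

# The camera sees the symbols (1, 0, 1) around some pixel; the neighborhood
# alone tells us which part of the projected pattern it is.
print(index[(1, 0, 1)])            # 3
```

A larger window makes codes easier to keep unique but averages over a larger surface patch, which is the trade-off between window size and accuracy mentioned above.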

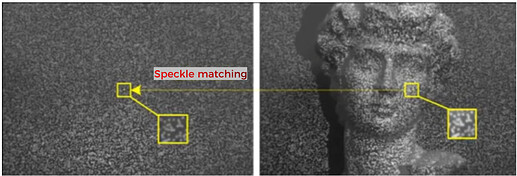

Speckle structured light (light coding)

Speckles, or pseudo-random speckles, are white speckles in structured light patterns. As a kind of spatially encoded structured light, speckles are now commonly used in binocular stereo vision to enrich texture information, so that parallax errors caused by unclear or coinciding textures can be corrected. A speckle-based binocular stereo vision system consists of two cameras and a random speckle projector.

Principle

The sizes and shapes of the speckles projected on the object depend on the distance between the camera and the object and on their relative positions. Depth information can then be calculated from these sizes and shapes.

Advantages

- Speckle size, quantity, and center positions are adjustable.

- Lower noise.

- Simple calculation.

- Low power consumption.

- High accuracy in short measurement distances.

- Only one picture required.

- Real-time processing.

Disadvantages

- The method is easily affected by environmental conditions and sunlight, so it is not suitable for outdoor use.

- The accuracy is low when measurement distances are long.

- Specular and bright surfaces lead to missing data and measurement errors.
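The drop in accuracy at long range follows from triangulation geometry in general. As a rough sketch, assuming the rectified relation Z = f·B/d between depth and disparity (an assumption, since the text gives no formula), a fixed disparity error produces a depth error that grows with the square of the distance. The function name and the numbers are illustrative.

```python
def depth_error(z_m, focal_px, baseline_m, disparity_error_px=1.0):
    """First-order depth uncertainty: dZ = Z^2 / (f * B) * dd."""
    return z_m ** 2 / (focal_px * baseline_m) * disparity_error_px


# Hypothetical sensor: 800 px focal length, 10 cm baseline, 1 px matching error.
for z in (1.0, 2.0, 4.0):
    print(z, depth_error(z, focal_px=800, baseline_m=0.1))
```

Doubling the distance quadruples the error in this model: about 1.25 cm at 1 m becomes about 20 cm at 4 m for the same one-pixel mismatch.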

Applications

- Face and gesture recognition. For example, iPhone X’s TrueDepth camera is based on the “Light Coding” technology of PrimeSense, an Israeli firm; Microsoft’s first-generation Kinect detects gestures for man-machine interaction.

A schema of speckle structured light:

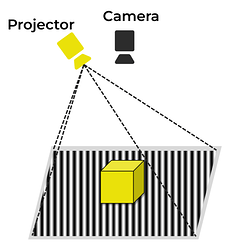

Sequential encoding

The projector projects structured light patterns of different brightness in sequence, and the camera captures the object under each projection. Each pixel thus receives a unique binary code. Whereas binocular measurement searches for pixels that match, sequential encoding searches for pixels that carry the same binary code.

Sequentially encoded structured light combines spatial and sequential methods. Instead of a single pattern, a series of unique patterns is projected, and the camera captures multiple images throughout the projection process. The light intensity at each pixel varies over time, and information from all of these intensities is used to establish the correspondence between camera pixels and projector pixels.
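A minimal sketch of the encoding and decoding steps, assuming plain binary stripe patterns and a fixed brightness threshold of 0.5. Real systems often use Gray codes and per-pixel thresholds; the function names and sample intensities here are hypothetical.

```python
def make_binary_patterns(width, n_bits):
    """One stripe pattern per bit; pattern b holds bit b (MSB first) of each column index."""
    if width > 2 ** n_bits:
        raise ValueError("not enough patterns to give every column a unique code")
    return [[(col >> (n_bits - 1 - b)) & 1 for col in range(width)]
            for b in range(n_bits)]


def decode_column(bits_over_time):
    """Rebuild the projector column from the bits one camera pixel saw over time."""
    col = 0
    for bit in bits_over_time:
        col = (col << 1) | bit
    return col


patterns = make_binary_patterns(width=8, n_bits=3)
for p in patterns:
    print(p)

# Intensities observed at one camera pixel across the three captures (made up).
observed = [0.9, 0.1, 0.8]
bits = [int(v > 0.5) for v in observed]
print(decode_column(bits))  # 5
```

Each camera pixel is decoded on its own, without looking at its neighbors. Once the projector column is known, depth follows from the same triangulation used for a camera pair, with the projector acting as the second view.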

Principle

Stripes are projected on the object, and their distortions in the scene provide depth information.

Advantages

- Patterns are sequentially encoded and processed pixel by pixel.

- There is no need to analyze pixels’ neighborhoods.

- There is no block averaging and less loss of spatial resolution.

- The method can measure textureless objects.

- The method is the best combination of spatial and sequential methods.

- The code is carried over time rather than over a spatial neighborhood.

- Accuracy and precision can be up to 100 times higher than those of the other technologies, making the method well suited to 3D machine vision.

Disadvantages

- Capturing a series of pictures is time-consuming.

- The object and the camera should remain static during data collection.

Applications

- Quality assurance: measuring objects’ sizes and detecting their flaws.

- Reverse engineering.

- Robot SLAM (simultaneous localization and mapping).

A schema of sequentially encoded structured light: